Technology

Introducing Confluent Private Cloud: Cloud-Level Agility for Your Private Infrastructure

Confluent Private Cloud (CPC) is a new software package that extends Confluent’s cloud-native innovations to your private infrastructure. CPC offers an enhanced broker with up to 10x higher throughput and a new Gateway that provides network isolation and central policy enforcement without client...

Queues for Apache Kafka® Is Here: Your Guide to Getting Started in Confluent

Confluent announces the General Availability of Queues for Kafka on Confluent Cloud and Confluent Platform with Apache Kafka 4.2. This production-ready feature brings native queue semantics to Kafka through KIP-932, enabling organizations to consolidate streaming and queuing infrastructure while...

New in Confluent Intelligence: A2A, Multivariate Anomaly Detection, Vector Search for Cosmos DB, Amazon S3 Vectors, and More

Explore new Confluent Intelligence features: A2A integration, multivariate anomaly detection, vector search for Cosmos DB and S3 Vectors, Private Link, and MCP support.

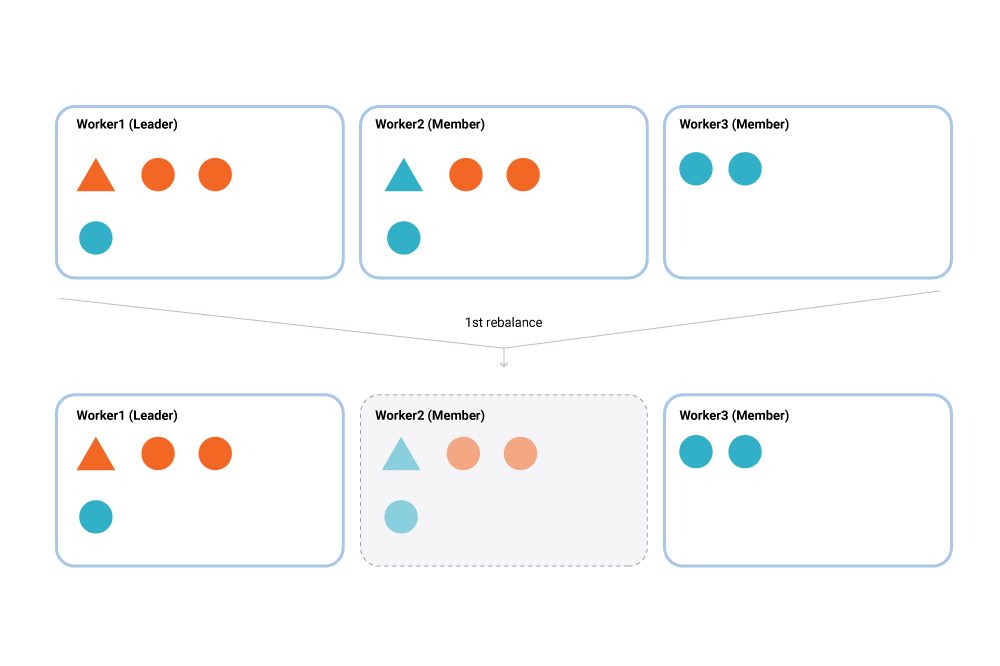

Incremental Cooperative Rebalancing in Apache Kafka: Why Stop the World When You Can Change It?

There is a coming and a going / A parting and often no—meeting again. —Franz Kafka, 1897 Load balancing and scheduling are at the heart of every distributed system, and […]

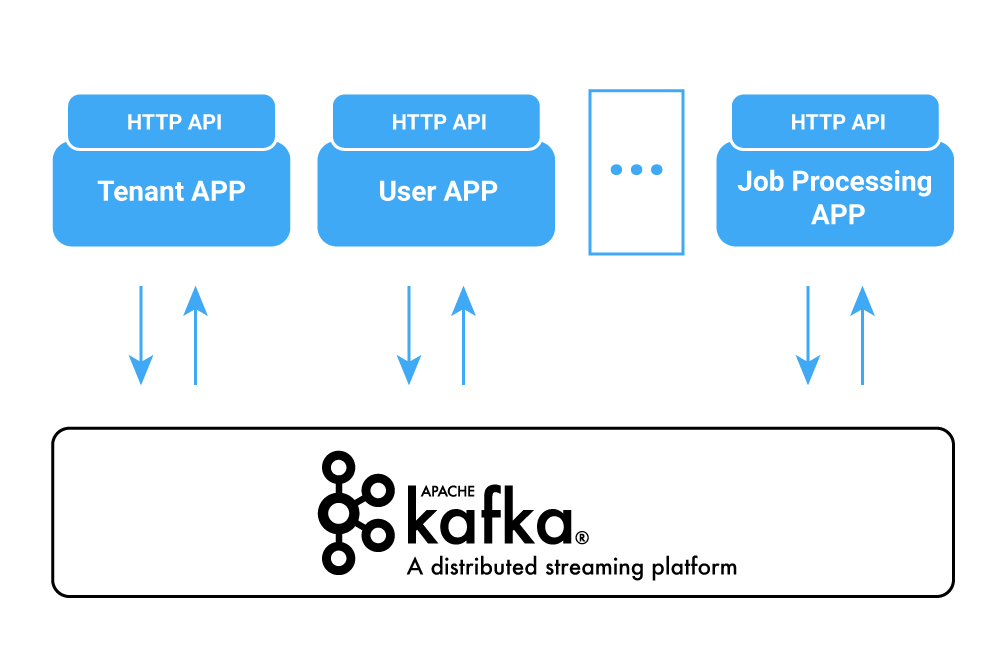

The Rise of Managed Services for Apache Kafka

As a distributed system for collecting, storing, and processing data at scale, Apache Kafka® comes with its own deployment complexities. Luckily for on-premises scenarios, a myriad of deployment options are […]

Multi-Region Clusters with Confluent Platform 5.4

Running a single Apache Kafka® cluster across multiple datacenters (DCs) is a common, yet somewhat taboo architecture. This architecture, referred to as a stretch cluster, provides several operational benefits and […]

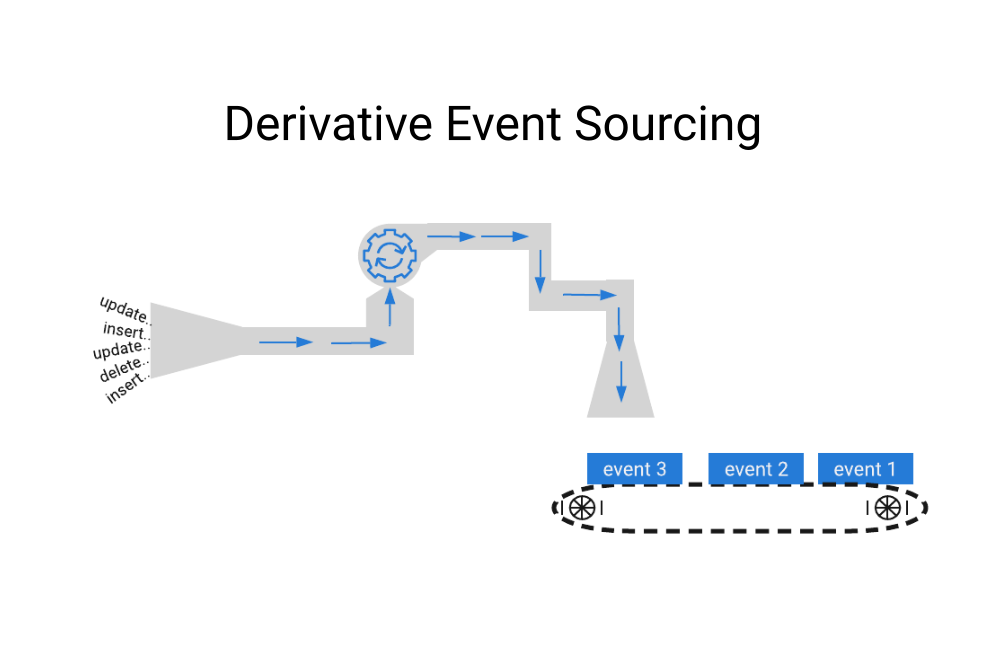

Introducing Derivative Event Sourcing

First, what is event sourcing? Here’s an example. Consider your bank account: viewing it online, the first thing you notice is often the current balance. How many of us drill […]
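The bank-account analogy can be sketched in a few lines. This is a minimal Python illustration under stated assumptions, not code from the article: it uses a hypothetical in-memory list of deposit and withdrawal events instead of a Kafka topic. The point is that the current balance is never stored directly. It is derived by replaying every event in order.

```python
from dataclasses import dataclass
from typing import Iterable


# Hypothetical event type for illustration only; a real system would read
# these from a durable, append-only log such as a Kafka topic.
@dataclass(frozen=True)
class AccountEvent:
    kind: str    # "deposit" or "withdrawal"
    amount: int  # amount in cents, to avoid floating-point rounding


def current_balance(events: Iterable[AccountEvent]) -> int:
    """Derive the balance by replaying the full event history from the start."""
    balance = 0
    for event in events:
        if event.kind == "deposit":
            balance += event.amount
        elif event.kind == "withdrawal":
            balance -= event.amount
        else:
            raise ValueError(f"unknown event kind: {event.kind}")
    return balance


history = [
    AccountEvent("deposit", 10_000),
    AccountEvent("withdrawal", 2_500),
    AccountEvent("deposit", 1_200),
]
print(current_balance(history))  # 8700
```

Because the balance is a pure function of the history, it can be rebuilt at any time, or recomputed as of any earlier point, by replaying a prefix of the log.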

How to Use Schema Registry and Avro in Spring Boot Applications

TL;DR: Following on from "How to Work with Apache Kafka in Your Spring Boot Application," which shows how to get started with Spring Boot and Apache Kafka®, here I will […]

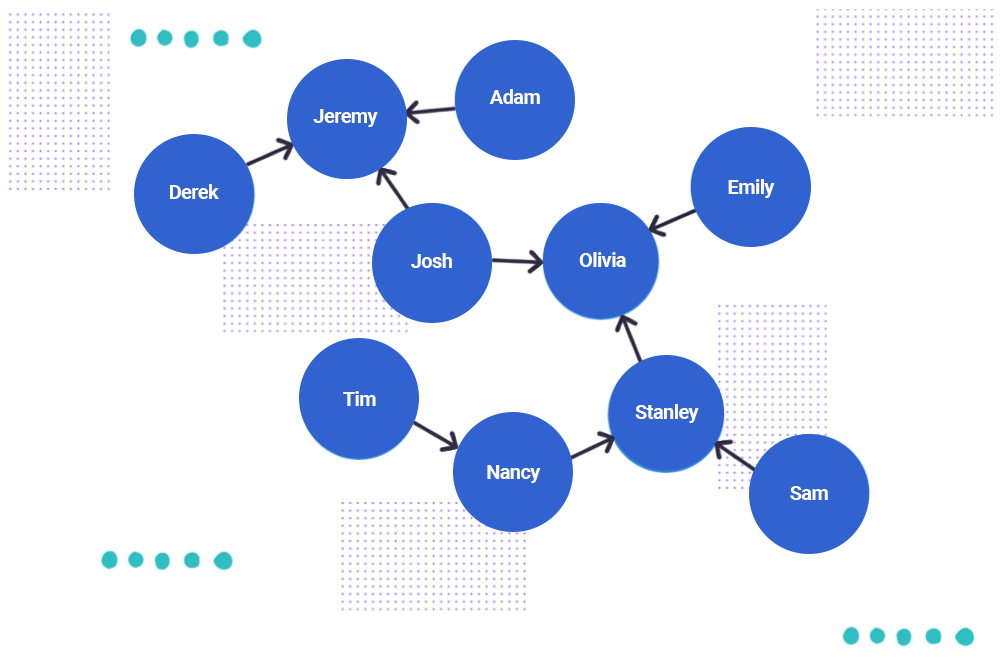

Using Graph Processing for Kafka Streams Visualizations

We know that Apache Kafka® is great when you’re dealing with streams, allowing you to conveniently look at streams as tables. Stream processing engines like ksqlDB furthermore give you the […]
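Viewing a stream as a table can be sketched as a fold over a keyed changelog, where the table holds the latest value seen for each key. The following minimal Python sketch is not from the article and makes these assumptions: the records are hypothetical in-memory `(key, value)` pairs, and a value of `None` acts as a delete marker, similar to tombstones in compacted Kafka topics.

```python
from typing import Iterable, Optional


def materialize(changelog: Iterable[tuple[str, Optional[int]]]) -> dict[str, int]:
    """Fold a keyed stream into a table holding the latest value per key."""
    table: dict[str, int] = {}
    for key, value in changelog:
        if value is None:
            # A None value is treated as a tombstone: remove the key.
            table.pop(key, None)
        else:
            # A newer record for the same key overwrites the older one.
            table[key] = value
    return table


stream = [("alice", 1), ("bob", 5), ("alice", 3), ("bob", None), ("carol", 2)]
print(materialize(stream))  # {'alice': 3, 'carol': 2}
```

Turning the relationship around, every update to such a table can be emitted as a new record, which yields a stream again. That round trip is what makes it convenient to move between the two views.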

Confluent Cloud Schema Registry is Now Generally Available

We are excited to announce the release of Confluent Cloud Schema Registry in general availability (GA), available in Confluent Cloud, our fully managed event streaming service based on Apache Kafka®. […]

Building Transactional Systems Using Apache Kafka

Traditional relational database systems are ubiquitous in software systems. They are surrounded by a strong ecosystem of tools, such as object-relational mappers and schema migration helpers. Relational databases also provide […]

A Guide to the Confluent Verified Integrations Program

When it comes to writing a connector, there are two things you need to know how to do: how to write the code itself, and how to help the world know about […]

Kafka Connect Improvements in Apache Kafka 2.3

With the release of Apache Kafka® 2.3 and Confluent Platform 5.3 came several substantial improvements to the already awesome Kafka Connect. Not sure what Kafka Connect is or need convincing […]

Shoulder Surfers Beware: Confluent Now Provides Cross-Platform Secret Protection

Compliance requirements often dictate that services should not store secrets as cleartext in files. These secrets may include passwords, such as the values for ssl.key.password, ssl.keystore.password, and ssl.truststore.password configuration parameters […]

Announcing Tutorials for Apache Kafka

We’re excited to announce Tutorials for Apache Kafka®, a new area of our website for learning event streaming. Kafka Tutorials is a collection of common event streaming use cases, with […]

ksqlDB UDFs and UDAFs Made Easy

One of ksqlDB’s most powerful features is allowing users to build their own ksqlDB functions for processing real-time streams of data. These functions can be invoked on individual messages (user-defined […]

Building Shared State Microservices for Distributed Systems Using Kafka Streams

The Kafka Streams API boasts a number of capabilities that make it well suited for maintaining the global state of a distributed system. At Imperva, we took advantage of Kafka […]